Fazeshift is an AI-native accounts receivable (AR) automation platform that replaces manual invoice-to-cash workflows with autonomous agents. It is purpose-built for traditional industries, with deep integrations spanning staffing, services, wholesale, construction, and beyond. The platform turns time-consuming AR processes into streamlined, agentic operations, freeing finance teams from mundane, repetitive, manual tasks. The result is higher efficiency and better cash flow: lower days sales outstanding (DSO), reduced outstanding AR, and a direct impact on EBITDA.

Sector: Technology

Jon Gelsey

Jon Gelsey is a board member at AI supercomputer company Elastix.ai, data security company Symmetry Systems, and .NET identity company Duende Software. Previously he was CEO of Xnor.ai, a computer vision and machine learning spinoff of the Allen Institute for AI and the University of Washington that Apple acquired in January 2020 for a publicly reported $200M. Before Xnor.ai, he was founding CEO of Auth0 Inc., an industry-leading identity-as-a-service platform, which he grew from 3 employees to nearly 300 over four years. Auth0 was acquired by Okta in February 2021 for $6.5B, the largest-ever private transaction in Seattle history. His previous experience includes responsibility for acquisitions and investments at Microsoft and work as a venture investor at Intel Capital. Jon started his career as a supercomputer designer at Convex Computer, which was acquired by Hewlett-Packard in 1995 and became the high end of its server product line.

Robotics Report: China Edition

2026 State of Robotics Report

The robotics investment market continues at its torrid pace. Investment in 2025 hit an all-time high, public companies continue to outperform the market, and exits are slowly picking up. Driven by growth in General Purpose Robotics and Defense, the excitement and momentum are palpable. The future of robotics is more exciting than ever!

We invite you to download the report here, and reach out to authors Sanjay Aggarwal and Betsy Mulé.

In Defense of Software. In Defense of Humans.

Last year I wrote a post explaining why vertical SaaS companies could win against the core foundation model providers. A few months later, that argument feels quaint. Anthropic has shipped Opus 4.5 and Claude Cowork with enterprise plugins for finance, engineering, and HR. OpenAI launched Codex. The foundation model providers are not just building models anymore — they are building products…and products that build products.

Arguing against them increasingly feels like shouting from the castle ramparts while the barbarian hordes storm the walls.

And yet. The argument that the foundation model providers will not win everywhere still holds for me. Focus and specialization still matter and are dispositive.

But that is no longer the right question.

The new central question is whether vertical AI vendors can win against the rest of the world armed with powerful, general-purpose agents. You can outrun three to five giants, but can you do the same against an army of weaponized customers and developers?

Nicolas Bustamante, the founder of Fintool and co-founder of Doctrine, recently made the case that LLMs are systematically dismantling the moats that once justified vertical software’s premium multiples. If you do not have proprietary data that cannot be scraped or synthesized, regulatory lock-in, or network effects, then general-purpose reasoning agents will eat your lunch. It’s a great synthesis, and every vertical player should take it to heart.

I agree that the best vertical startups will have those moats. But a lot of enterprise software will be bought from startups that do not fit neatly into that framework, and some will be enduring businesses.

Here is why — and the answer has everything to do with where the barbarians actually are.

The Threat Is Inside the Building

The conversation about AI disrupting software usually imagines the threat coming from outside. But the more immediate and chaotic threat comes from inside the enterprise itself. General-purpose agents are now powerful enough that anyone in an organization can build one. A marketing manager spins up an agent that pulls customer data. A junior analyst automates a reporting workflow without anyone reviewing the logic. Three departments independently build agents that interact with the same CRM, creating conflicts no one anticipated.

For an enterprise, this is both beautiful and frightening. For CISOs, CFOs, and Chief Risk Officers, it’s mostly frightening. They will not sign off on a world where hundreds of ungoverned agents run loose across the organization.

And that is exactly why enterprises will buy software: not just to do the work, but to impose the structure, governance, and coherence that make AI-powered work safe and reliable at organizational scale. The barbarians are not just at the gates. They are inside the building. And that is the problem enterprise software has always existed to solve.

The Answer: Packaging Complexity

Enterprises do not buy features. They buy solutions — and solutions require design, orchestration, governance, security, and accountability. Capability alone is not a deployable enterprise solution.

#1 Design addresses how humans and agents interact. It is paramount yet uncharted territory. SaaS codified enterprise processes into software. But one of the most profound changes coming with AI is that enterprises will move from buying automation to buying outcomes. Those who argue that workflow is dead are directionally correct, but incomplete. In its place, we will need well-designed agentic workflows that manage the handoffs between agents and humans — and those workflows will be reimagined from scratch around outcomes.

It’s early. Most startups are still wedging in with discrete task automation that tackles the jobs to be done. That is smart for now and an easier sale to enterprises, but ultimately not enough. The saying “your mess for less” was common in Business Process Outsourcing (BPO) because BPO firms rarely redesigned and improved enterprise processes. Inserting Agent X for this task and Agent Y for that one, while doing the job the same way, will not create long-term winners.

Instead, AI startups need to begin with an outcome-design mindset. Consider commercial lending. A startup thinking in terms of task replacement would first gather all the borrower data, then have an agent automate the financial spreading and wait for a human to review, and finally have an agent create an underwriting memo and wait for a human to edit. A design built around outcomes will take advantage of the 24×7, scalable agent capabilities and create a real-time, iterative conversation with the borrower. Agents could request data as needed to qualify borrowers, show borrowers how the lender will model their creditworthiness and match them with loan products, and allow them to change variables like loan rate, points, or term. At any point, the borrower could ask to speak with a loan officer, and the agents would brief the loan officer with notes, recommendations, best next steps, and so on. If this saves the loan officer 10 hours per loan, all of that time can go to relationship building, cross-selling, and ensuring the customer gets the most value from the lender relationship.

Design will be the most durable form of domain advantage. General-purpose agents will probably learn every domain eventually. An agent can learn the rules of commercial lending or insurance underwriting. But knowing the domain is not the same as knowing how to redesign the work. Designing the right interaction between agents and humans (where to automate, where to hand off, where to keep a human in the loop not for compliance but because it genuinely produces a better outcome) requires the kind of judgment that only comes from deep, sustained focus on a problem space. That’s not a knowledge gap. It’s a design gap.

Get the agent-human interaction design right, and you will have an early advantage that compounds. In the early days of ecommerce, Amazon nailed the checkout flow while countless merchants had obtuse, high-friction checkouts. The underlying capability was the same, but Amazon’s design of the end-to-end experience created an early competitive advantage that compounded. It seems obvious now, but it was not at the time.

#2 Orchestration is about how agents work with each other. Any meaningful enterprise process involves multiple agents — one that extracts data, another that analyzes it, another that drafts a communication, another that checks compliance. Someone must decide the architecture: which agents manage the workflow, when to use specialized agents, and when to invoke a skill versus spinning up a separate agent. Think of it like staffing an investment team — you need a leader who knows when to pull in the tax expert, the industry analyst, or outside counsel, in what order, with what context passed along. Knowing the industry vertical and the function is critical to optimizing specialist agents vs. general-purpose agents, pre-built integrations vs. as-needed API calls, and agents vs. humans. All of this will affect cost, reliability, and speed.
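To make the staffing analogy concrete, here is a minimal sketch of a lead orchestrator in Python. It assumes each agent can be modeled as a plain function that reads a shared context and returns new fields to merge into it; the agent names, the invoice example, and the $10,000 threshold are all hypothetical. A real system would wrap model calls, retries, and error handling around each step, but the shape is the same: a fixed order, context passed forward, and a rule for when to pull in a specialist.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical: an "agent" is any function that reads the shared context
# and returns new fields to merge into it. Real agents would wrap model calls.
Agent = Callable[[dict], dict]


def extract(ctx: dict) -> dict:
    # Pull the fields later agents need out of the raw invoice record.
    inv = ctx["invoice"]
    return {"amount": inv["amount"], "customer": inv["customer"]}


def analyze(ctx: dict) -> dict:
    # Hypothetical review threshold for "high-value" invoices.
    return {"high_value": ctx["amount"] > 10_000}


def draft(ctx: dict) -> dict:
    return {"message": f"Reminder: {ctx['customer']} owes ${ctx['amount']:,}."}


def compliance_check(ctx: dict) -> dict:
    # Toy rule: any message routed to this specialist needs human sign-off.
    return {"needs_human": True}


@dataclass
class Step:
    name: str
    agent: Agent
    # When the lead should pull this agent in; by default it always runs.
    when: Callable[[dict], bool] = lambda ctx: True


@dataclass
class Orchestrator:
    steps: list[Step]

    def run(self, ctx: dict) -> tuple[dict, list[str]]:
        trace = []
        for step in self.steps:
            if not step.when(ctx):
                continue  # the lead decides this specialist is not needed
            ctx = {**ctx, **step.agent(ctx)}  # pass accumulated context forward
            trace.append(step.name)
        return ctx, trace


lead = Orchestrator([
    Step("extract", extract),
    Step("analyze", analyze),
    Step("draft", draft),
    Step("compliance", compliance_check, when=lambda ctx: ctx["high_value"]),
])

result, trace = lead.run({"invoice": {"amount": 12_500, "customer": "Acme"}})
print(trace)              # ['extract', 'analyze', 'draft', 'compliance']
print(result["message"])  # Reminder: Acme owes $12,500.
```

A low-value invoice would skip the compliance specialist entirely, which is the kind of cost, reliability, and speed trade-off the orchestration layer has to encode.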

#3 Governance means the policies, approvals, and controls that determine who can deploy an agent, what it is allowed to do, what happens when two agents duplicate each other’s work, how decisions are reviewed, and which security and data requirements apply. In financial services, a model that generates investment recommendations may need to be validated, documented, and approved before it goes live. Some payments may be initiated by agents; others require human approval. In healthcare, an agent might read a radiology report and even share the results, yet lack the authority to order prescriptions.
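A minimal sketch of what such a policy layer might look like, in Python. Every agent ID, action name, and the $5,000 approval limit are hypothetical; the point is the shape: default-deny for unknown agents and actions, an explicit threshold above which a human must approve, and an audit log that records each decision for later review.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset
    # Hypothetical: amounts above this limit require a human approver.
    approval_limit: Optional[float] = None


# Hypothetical policy registry keyed by agent ID.
POLICIES = {
    "radiology_assistant": Policy(frozenset({"read_report", "share_results"})),
    "payments_agent": Policy(frozenset({"initiate_payment"}), approval_limit=5_000.0),
}

AUDIT_LOG: list[tuple[str, str, Decision]] = []


def decide(agent_id: str, action: str, amount: Optional[float] = None) -> Decision:
    policy = POLICIES.get(agent_id)
    if policy is None or action not in policy.allowed_actions:
        decision = Decision.DENY  # default-deny unknown agents and actions
    elif (amount is not None and policy.approval_limit is not None
          and amount > policy.approval_limit):
        decision = Decision.NEEDS_APPROVAL  # route to a human approver
    else:
        decision = Decision.ALLOW
    AUDIT_LOG.append((agent_id, action, decision))  # record for later review
    return decision


print(decide("radiology_assistant", "share_results").value)        # allow
print(decide("radiology_assistant", "order_prescription").value)   # deny
print(decide("payments_agent", "initiate_payment", 1_200).value)   # allow
print(decide("payments_agent", "initiate_payment", 25_000).value)  # needs_approval
```

Default-deny is the design choice a CISO would likely insist on: an agent nobody registered, or an action nobody granted, fails closed rather than open.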

#4 Security in the enterprise is table stakes. It means data isolation, access controls, audit trails, and compliance with industry-specific regulations like HIPAA, SOX, or GDPR. A general-purpose agent can be powerful, but an enterprise buyer needs to know exactly what data it can access, who can see its outputs, and how to prove that to a regulator. Security may not strongly favor vertical software over foundation model providers in all industries, but it can in industries with industry-specific regulations like healthcare, financial services, and public sector.

#5 Accountability means having a throat to choke. When an agent makes an error that costs money or creates risk, enterprises need a vendor who owns the outcome. They need SLAs, incident response, and a product team that understands the domain well enough to diagnose what went wrong. A general-purpose agent does not come with a customer success team that knows your industry.

The companies that package all this together — the design, orchestration, security, governance, and accountability — are building something that a general-purpose agent with plugins simply does not replicate. Packaging complexity is a real and enduring source of value.

Vertical Software Players Can Succeed, But the Race Is On

This case for packaging complexity IS the defense of software, and especially of vertical software that brings domain knowledge to every decision. However, it’s not yet clear how enterprises will buy and deploy all this needed governance, security, and accountability.

Three categories of players are competing for the enterprise AI stack.

Foundation model providers. Anthropic, OpenAI, and Google are firmly in individual productivity today, but their trajectory makes clear they are moving toward this orchestration layer. Anthropic shipped Cowork in January, added plugins two weeks later, then added enterprise connectors, private plugin marketplaces, and admin controls two weeks after that. The February launch explicitly featured orchestration across Excel and PowerPoint: context flowing between tools, not just a human chatting with an agent. The pace is extraordinary. What keeps them from becoming the default orchestration layer? Risk of model lock-in, for one. But some buyers will accept that.

Purpose-built horizontal orchestration platforms. Players such as Stack AI, Thread AI, and Microsoft’s Copilot Studio posit that the enterprise wants a single neutral orchestration layer across all business functions: visual workflow builders, multi-agent coordination, governance dashboards, and deployment infrastructure. This looks a lot like how enterprises have bought software for decades, and frankly it is hard to imagine not having some layer like this, because enterprises need to manage complexity across all their functions.

Vertical software vendors — Harvey in legal, Fazeshift in accounts receivable, Abridge in healthcare — these kinds of players own the domain expertise, workflow design, and customer relationship for a specific function. This is the category that needs to change the most and get the packaging right to survive. The best will absorb security, governance, and orchestration into their own products because their customers will demand it.

Realistically, all three will find buyers in the enterprise along lines of size, scale, and technical sophistication. JPMorgan will build a lot more software in-house than it did before because the cost of doing so will fall. It will almost certainly have its own horizontal orchestration platform. Small and midsize companies, such as community banks or domestic manufacturers, will buy many individual vertical software products and will need orchestration built in.

These are genuinely open architectural questions. What I believe is that vertical vendors are best positioned to solve the hardest part: designing and packaging the domain-specific work that produces outcomes. Whether they build, buy, or integrate the horizontal infrastructure is a strategic question each will answer differently. But domain expertise comes first, and that is not something a horizontal platform or foundation model can easily replicate.

Conclusion

Making the case for vertical startups may look crazy right now. That’s fine. The foundation model providers are building governance, orchestration, and enterprise packaging at remarkable speed, and the window for startups is not infinite. But the high-probability scenario is that the greatest problem to be solved is the hard, domain-specific work of designing how agents and humans should interact, how agents work with each other, and how all of it operates safely within the constraints of a real enterprise. That work favors the focused over the general. The opportunity is decades long, but the window to establish yourself is right now.

Kendra Ryan

Kendra Ryan joined F-Prime in 2026 as a Director of Ecosystem Network. She focuses on nurturing and expanding the firm’s executive talent and advisory networks across AI, fintech, and enterprise software. Prior to F-Prime, she was a Director at SPMB Executive Search where she led executive searches across the technology ecosystem.

In addition to her role at F-Prime, Kendra serves as an Advisory Board Member for the Best Buddies San Francisco Chapter, a nonprofit dedicated to ending the social, physical, and economic isolation of people with intellectual and developmental disabilities.

Kendra graduated from the University of Southern California with a degree in Neuroscience.

Liam Maniscalco

Liam is an Associate at F-Prime, where he focuses on early-stage investments in enterprise software and frontier technologies. Prior to joining F-Prime, he was a consultant at Bain & Company in the Private Equity Group, working with leading private and growth equity firms on commercial due diligence for software companies.

Liam is a graduate of Swarthmore College, where he received degrees in Economics and Political Science, and was a student-athlete.

Jump

Jump is an AI assistant for wealth management, enabling financial advisors to automate workflows prior to, during, and after client-facing meetings. Using Jump, advisors can save up to 20 hours per week by automating administrative tasks like meeting prep, note-taking, and email follow-up.

Monark Markets

Monark Markets provides “Alts-As-A-Service” infrastructure to brokerage firms and wealth management platforms. Monark’s APIs enable embedded access to private markets from within partners’ existing trading platforms.

The State of Fintech in 2026

It’s here! All subscribers to Fintech Prime Time can access the full 2026 State of Fintech report via the F-Prime Fintech Index.

But first, save your spot with the F-Prime team for a virtual presentation and discussion of our findings on Tuesday, February 24 at 12pm ET / 9am PT.

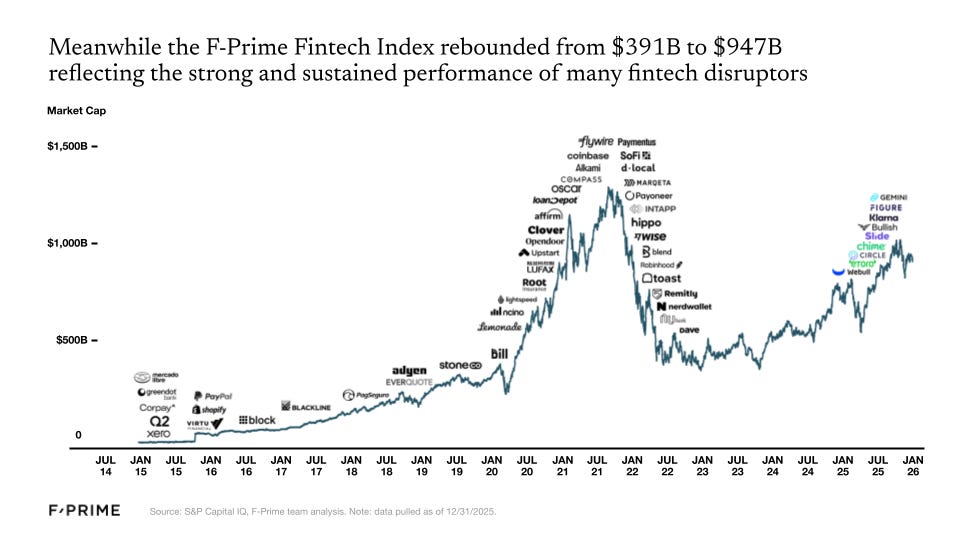

The fintech industry has experienced its ups and downs over the last five years. In 2021, the F-Prime Fintech Index market cap rose to $1.3T, followed by a swift correction in 2022 when the Index bottomed out below $400B. The effects of that correction lingered into 2023, but the Index began a slow and steady rebound in 2024. By the end of 2025, the F-Prime Fintech Index was almost back to $1T.

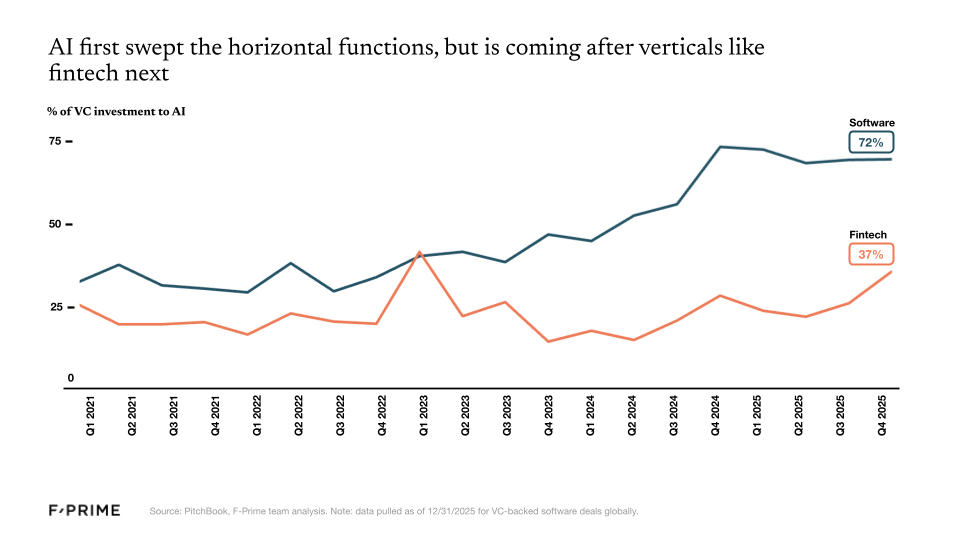

At the same time, 2025 was the year we could definitively say three things. First, the fintech investments of the last decade have produced multiple new industry giants that lead in their respective categories — Nubank, Affirm, Stripe, Toast, and Robinhood, to name a few. Second, crypto has earned its seat next to traditional finance (TradFi). We expand on both these points in the State of Fintech report. Finally, 2025 was not the year of AI in financial services, at least relative to its early adoption in other industries and functions like coding, customer service, and legal. However, it is coming quickly and we anticipate future State of Fintech reports will show a lot more adoption.

The first months of 2026 brought sharper market discipline than many expected, eliminating over 80% of the Fintech Index market cap gain between year-end 2024 and year-end 2025. Despite the Q1 2026 sell-off, we believe financial services providers will ultimately benefit more from AI than be disrupted by it. The outlook is less forgiving for legacy technology vendors serving financial institutions, many of which risk being displaced by native agentic architectures. For now, however, public markets appear to be painting the sector with a broad brush.

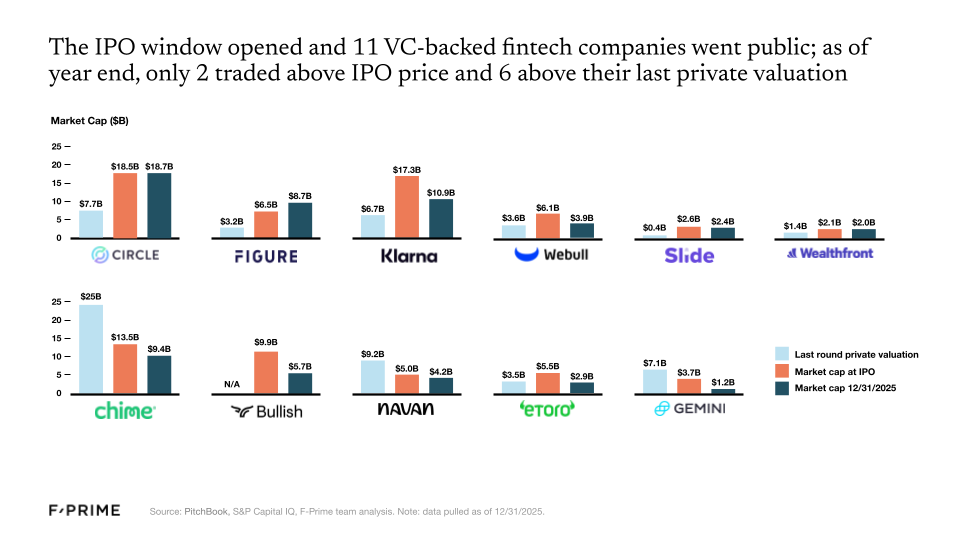

A Thaw in Public Fintech Markets

Sixteen fintech companies went public in 2025, 11 of which were VC-backed. Despite subpar performance for many of these companies in the public markets (as of 12/31/2025, only two traded above their IPO price, and six traded above their last private-round valuation), the IPO window is officially open. More public listings are on their way, with three more already in 2026! Meanwhile, fintech M&A is showing even greater signs of health, rebounding to pre-2021 levels.

Revenue multiples also continue to rise — over the last two years, investors have prioritized so-called “goldilocks” companies that are neither growing too fast nor too slow while approaching profitability. As for the companies comprising the F-Prime Fintech Index, fundamentals continue to strengthen. They grew at an average of 29% over the last year, with every sector seeing meaningful increases in net income margins since the growth-at-all-costs mindset that characterized the 2021 peak.

A New Generation of Financial Services Giants

The last 15 years have produced new industry heavyweights. Much like Uber, PayPal, and Square were initially dismissed yet came to lead their respective industries, so too have companies like Nubank, Affirm, Stripe, Toast, and Robinhood become leaders in theirs.

Measured against American banks, Revolut, SoFi, and Nubank would each now rank in the top 1.5%. Each has nearly $30B in deposits. In payments, Stripe and Adyen were tied for fifth place among the top global merchant acquirers, each with around $1.4T in total payment volume (TPV), while Toast processes an estimated 15% of the restaurant industry’s payment volume.

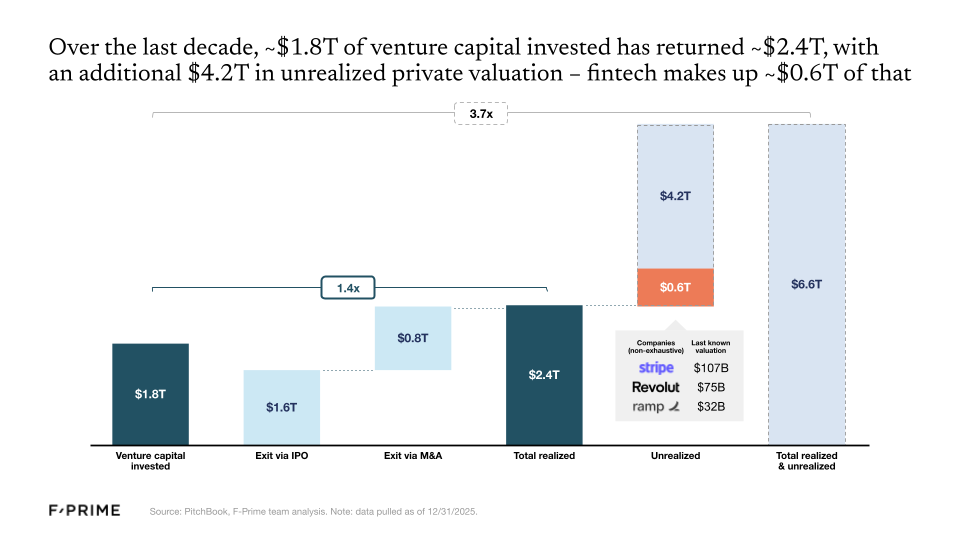

So the fintech wave of the 2010s has now officially produced its first generation of giants, but there are many others still waiting in the wings. Roughly $1.8T of venture capital has been invested in the category over the last decade, returning an estimated $2.4T. But $4.2T remains locked up in innovative private companies, with fintech making up around $0.6T of that total, including some of the most valuable fintech companies like Stripe ($107B), Revolut ($75B), and Ramp ($32B).

Crypto Grows Up

As of 2025, we can officially say that the crypto industry has earned a front-row seat alongside TradFi, crossing a number of thresholds that show real integration with the broader economy. For starters, issuers like BlackRock and Fidelity contributed to a total of more than 75 new crypto ETFs launched in 2025. This marks a structural shift in the makeup of the crypto market. At the same time, regulators’ posture toward crypto shifted meaningfully in 2025, paving the way for further institutional adoption.

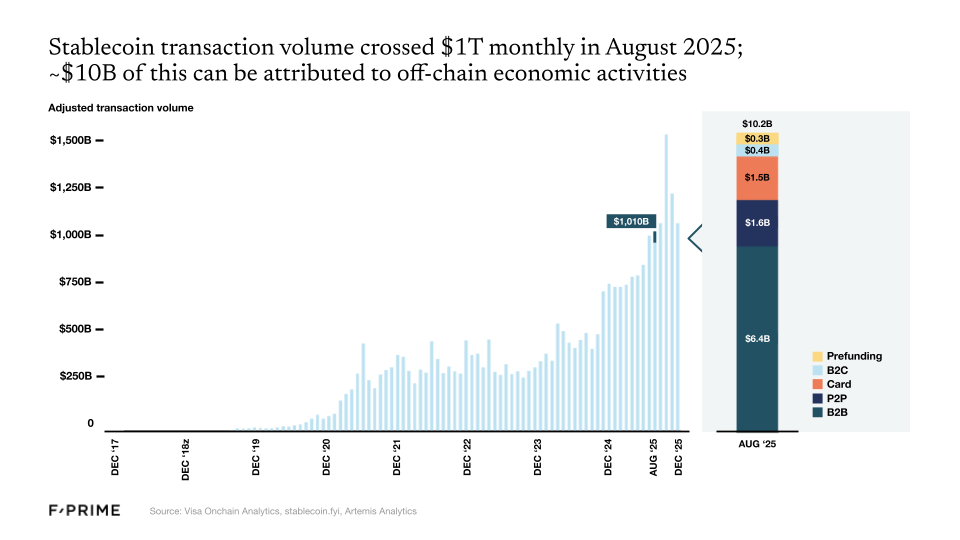

And then there are stablecoins, which crossed $1T in monthly volume in 2025. Stablecoins may be the best example of a “killer use case” in crypto. Stablecoins could reduce the cost of remitting $200 from $20-30 via bank transfer to less than $1.
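Expressed as a share of the $200 transfer, the fees cited above work out as follows (simple arithmetic on the stated figures):

```latex
\frac{\$20}{\$200} = 10\%, \qquad \frac{\$30}{\$200} = 15\%, \qquad \frac{\$1}{\$200} = 0.5\%
```

In other words, a bank-transfer fee of 10 to 15 percent of the amount sent would fall to under 0.5 percent.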

Following the initial adoption of stablecoins and tokenized treasuries, it is fair to ask whether any financial asset will remain untokenized 10 years from now. The next few years will see tokenization expand across a wider spectrum of asset classes, including real estate, private credit, and other private funds.

AI Has Not Transformed Fintech (Yet)

There has been a lot of hype, but 2025 was not the year of AI in fintech. For now it remains a huge, mostly untapped opportunity — financial services is responsible for more than 20% of GDP in the US, but the industry currently has one of the lowest adoption rates for AI agents.

We knew that financial services would lag behind other industries, and for good reason. Accelerated AI adoption works for industries where:

- Context is text-heavy instead of numbers-heavy,

- Existing systems of record are easy to integrate with,

- Stakes are relatively low and imprecise values are still valuable, and

- There is low regulatory exposure.

Financial services strikes out on most of these points.

In the broader enterprise software space, nearly three quarters of every dollar invested now goes to AI companies. In the fintech vertical, that number is closer to one third. Since the launch of ChatGPT, fintech has produced a lower percentage of unicorn companies, and those that reach unicorn status are usually not AI-native.

However, we know that financial services is a worthy vertical for AI to tackle. The large models are already building for financial services — OpenAI in payments, Anthropic in financial research — but we believe startups can differentiate on workflow, integrations, and domain knowledge.

By the end of 2025, the primitives for nearly every sector of fintech had been put in place, and they are now ready for a new AI-native application layer to be built on top. We expect the coming years to be exciting and critical ones for AI in financial services and commerce, and it’s time to put the next generation of building blocks in place.

We’ve Never Been More Excited for the Future of Financial Services

If you’re as passionate about fintech as we are, there are so many reasons for excitement.

The regulatory landscape has never been more open to crypto innovation and adoption, and stablecoins are revolutionizing the way money flows around the world. Crypto ETFs are unlocking new pools of capital, and tokenization promises to create a more efficient infrastructure for all asset classes.

It’s still early days for AI in fintech, but the technology is already redesigning the way financial services businesses underwrite risk, design products, allocate capital, and serve their customers. And that’s before we consider AI’s role in determining how consumers earn and save, spend and pay, borrow and build wealth.

The last decade forged a new generation of great financial services companies, and AI is going to create the next one.

Go deeper: Access the full report via the F-Prime Fintech Index here.