
Blog posts

Behind the Breakthrough: Q&A with Scott Bratman, Chief Innovation Officer of Adela

For more than a decade, Scott Bratman has worked at the forefront of liquid biopsy innovation – developing noninvasive approaches to cancer detection that rely on a simple tube of blood.

Bratman’s research began at Stanford University, where first-generation tests focused on identifying mutations in circulating DNA. These were revolutionary at the time, offering a new window into advanced cancer biology, but the tests struggled with early detection and provided limited tissue specificity.

Bratman later joined forces in Toronto with Dr. Daniel De Carvalho, now CSO of Adela, at the University of Toronto and University Health Network to explore a more powerful biomarker: DNA methylation. Unlike mutations, methylation patterns offer a broader and more contextual signal, capturing not just the presence of cancer but clues about where it originates and how it evolves. Their collaboration produced a breakthrough: a test capable of picking up on multiple early-stage cancers that became the foundation for Adela. Adela’s proprietary approach avoids chemical damage to DNA, allowing for a new generation of high-resolution diagnostics. Bratman shares how this technology moved from bench to bedside and is helping to usher in a new era for cancer diagnostics.

You started in cancer diagnostics during your training. What led you to found Adela?

I am a clinician scientist with a background in oncology and have worked in the liquid biopsy field for the past 15 years. My training began at Stanford University, where I studied approaches to detect cancer in the bloodstream. I later established a research lab in Toronto, where I partnered with Adela’s CSO Daniel De Carvalho, to address the limitations of first-generation liquid biopsy tests. Our work focused on leveraging DNA methylation to improve detection performance, laying the scientific foundation for the founding of Adela.

Was there a specific “a-ha moment” where you knew you had found something viable as a company moving forward?

Yes. In those early days of liquid biopsy, we could basically detect advanced cancers and find specific mutations linked to resistance or sensitivity to targeted therapies. That was a breakthrough back then, but we lacked the tools to address the full spectrum of cancer, particularly early detection and molecular residual disease after treatment. We focused our efforts on unlocking new technologies using DNA methylation to expand the clinical utility of liquid biopsy.

The “a-ha” moment came from early work in our labs. We saw the potential for detecting not just one, but seven different cancers at early stages from a small blood sample and with a single test. That was unprecedented at the time. It got us thinking about how far this could go in cancer diagnostics across the full spectrum of disease.

Why methylation patterns? Why are they such powerful biomarkers for cancer?

DNA methylation underlies the development of cells at the earliest stages of differentiation into tissues. Dysregulation of this process can lead to cancers. Because we see this across cancer types and tissue types, DNA methylation in the blood can be used to detect the presence of cancer and identify which tissues are shedding DNA into the bloodstream.

Prior to this, most work focused on DNA mutations, which don’t have that same tissue-type specificity. The challenge historically was that profiling methylation required chemical treatments that degraded the DNA, resulting in a lower signal-to-noise ratio. The platform technology we developed focused on preserving that precious material within a small tube of blood so we could analyze it accordingly.

What makes your approach distinct from peers in your field?

What makes our approach unique is that we use a signal-preserving assay. We do not degrade DNA; instead, we simultaneously enrich the informative, methylated regions within a blood sample and target those for sequencing and analysis.

By having a single assay platform, we can generate data that feeds into analytical models for machine learning to develop signatures for different diseases. We think of it as an engine. We maintain a single platform, derive insights from every sample, and feed a continuous learning machine that gives rise to different products. That is a massive differentiator compared to fixed panels or specific biomarkers.

Adela originally focused on multi-cancer early detection (MCED), but molecular residual disease (MRD) has been a major emphasis recently. How are you balancing those ambitions?

We believe our platform has broad potential across all areas of cancer diagnostics, but we have chosen to strategically focus on applications with strong demand and immediate clinical utility. MRD represents a particularly tangible, near-term opportunity, as it pertains to patients who already have a cancer diagnosis and require rapid, informed clinical decision-making.

Today, therapy response is primarily monitored using medical imaging, such as CT or MRI scans, which have limited sensitivity, are expensive, and often require patients to travel to specialized centers. A blood test that can complement or even replace standard imaging offers a powerful advantage for both patients and physicians.

You mentioned patients traveling to specialized centers. Can you talk about the impact this technology could have on accessibility?

This technology was invented in Canada, a vast country where most of the population is concentrated in a few cities, leaving large regions with sparsely populated communities. These communities often have poor access to specialized medical imaging. Improving accessibility is fundamental to the origin story of Adela, as we believe a blood test can bring advanced cancer management closer to patients in these disparate communities.

Another key challenge is “tissue accessibility.” Many existing MRD tests require a tumor tissue specimen, adding time, cost, and often making testing impossible for patients without sufficient tumor material. For example, in head and neck cancer, over a third of patients are estimated to lack sufficient tissue for these tests. A tissue-free test removes this barrier, enabling accessible cancer management for a much broader patient population.

What are some of the most important lessons that you’ve learned building a company in this space?

Given my background as a researcher and technology inventor, I have sometimes been at risk of tunnel vision. Early on in founding Adela, I quickly realized the importance of partnering with people whose expertise complemented my own: people who had successfully brought products to market and navigated the complexities of fundraising in a competitive space such as cancer diagnostics. I have learned a tremendous amount from mentors and partners during this journey with Adela. Building the right team from the outset created a foundation that allows us to keep patients at the center of what we do.

What is the roadmap for the next 12 to 24 months?

We are very excited about the upcoming launch of our MRD test for use in head and neck cancer, which addresses a significant unmet need in monitoring for recurrence in patients who have completed treatment. Building on that momentum, we recently had a publication accepted on immunotherapy response monitoring. This second product is particularly important because it shows the continuity of our platform across both early-stage and more advanced-stage disease.

We plan to launch the immunotherapy product next, while continuing to advance MRD development for other indications. Simultaneously, we have a large multi-cancer early detection study underway, and we look forward to generating more data in that space as well.

What advice would you offer to others looking to translate a basic discovery into real-world impact?

Technology today is powerful, with seemingly limitless opportunities to push the envelope of what is possible. But at the end of the day, you must ask: What do the data mean for the patient? How does it improve the partnership between the physician and the health system? These questions serve as our guiding light in the development of every test we create.

2026 State of Robotics Report

The robotics investment market continues at its torrid pace. Investment in 2025 was at an all-time high, public companies continue to outperform the market, and exits are slowly picking up. Led by the growth in General Purpose Robotics and Defense, the excitement and momentum are palpable. The future of robotics is more exciting than ever!

We invite you to download the report here, and reach out to authors Sanjay Aggarwal and Betsy Mulé.

In Defense of Software. In Defense of Humans.

Last year I wrote a post explaining why vertical SaaS companies could win against the core foundation model providers. A few months later, that argument feels quaint. Anthropic has shipped Opus 4.5 and Claude Cowork with enterprise plugins for finance, engineering, and HR. OpenAI launched Codex. The foundation model providers are not just building models anymore — they are building products…and products that build products.

Arguing against them increasingly feels like shouting from the castle ramparts while the barbarian hordes storm the walls.

And yet. The argument that the foundation model providers will not win everywhere still holds for me. Focus and specialization still matter and are dispositive.

But that is no longer the right question.

The new central question is whether vertical AI vendors can win against the rest of the world armed with powerful, general-purpose agents. You can outrun three to five giants, but can you do the same against an army of weaponized customers and developers?

Nicolas Bustamante, the founder of Fintool and co-founder of Doctrine, recently made the case that LLMs are systematically dismantling the moats that once justified vertical software’s premium multiples. If you do not have proprietary data that cannot be scraped or synthesized, regulatory lock-in, or network effects, then general-purpose reasoning agents will eat your lunch. It’s a great synthesis, and every vertical player should take it to heart.

I agree that the best vertical startups will have those moats. But a lot of enterprise software will be bought from startups that do not fit neatly into that framework, and some will be enduring businesses.

Here is why — and the answer has everything to do with where the barbarians actually are.

The Threat Is Inside the Building

The conversation about AI disrupting software usually imagines the threat coming from outside. But the more immediate and chaotic threat comes from inside the enterprise itself. General-purpose agents are now powerful enough that anyone in an organization can build one. A marketing manager spins up an agent that pulls customer data. A junior analyst automates a reporting workflow without anyone reviewing the logic. Three departments independently build agents that interact with the same CRM, creating conflicts no one anticipated.

For an enterprise, this is both beautiful and frightening. For CISOs, CFOs, and Chief Risk Officers, it’s mostly frightening. They will not sign off on a world where hundreds of ungoverned agents run loose across the organization.

And that is exactly why enterprises will buy software — not just to do the work, but to impose the structure, governance, and coherence that makes AI-powered work safe and reliable at organizational scale. The barbarians are not just at the gates. They are inside the building. And that is the problem enterprise software has always existed to solve.

The Answer: Packaging Complexity

Enterprises do not buy features. They buy solutions — and solutions require design, orchestration, governance, security, and accountability. Capability alone is not a deployable enterprise solution.

#1 Design addresses how humans and agents interact. It is paramount yet uncharted territory. SaaS codified enterprise processes into software. But one of the most profound changes coming with AI is that enterprises will move from buying automation to buying outcomes. Those who argue that workflow is dead are directionally correct, but incomplete. In its place, we will need well-designed agentic workflows that manage the handoffs between agents and humans — and those workflows will be reimagined from scratch around outcomes.

It’s early. Most startups are still wedging in with discrete task automation tackling the jobs to be done. That is smart for now and an easier sale to enterprises, but ultimately not enough. The saying “your mess for less” was common in Business Process Outsourcing (BPO) because BPO firms rarely re-designed and improved enterprise processes. Inserting Agent X for this task and Agent Y for that one, but doing the job the same way, will not create long-term winners.

Instead, AI startups need to begin with an outcome-design mindset. Consider commercial lending. A startup thinking in terms of task replacement would first gather all the borrower data, then have an agent automate the financial spreading and wait for a human to review, and finally have an agent create an underwriting memo and wait for a human to edit. A design built around outcomes will take advantage of the 24×7, scalable agent capabilities and create a real-time, iterative conversation with the borrower. Agents could request data as needed to qualify borrowers, show the borrowers how the lender will model their credit worthiness and match them with loan products, and allow the borrower to change variables like loan rate, points, or term. At any point, the borrower could request to speak with a loan officer, and when they do, the agents would prepare the loan officer with notes, recommendations, best next steps, etc. If this saves the loan officer 10 hours per loan, all of that can be spent on relationship building, cross-selling, and ensuring the customer gets the most value from the lender relationship.

Design will be the most durable form of domain advantage. General-purpose agents will probably, eventually learn every domain. An agent can learn the rules of commercial lending or insurance underwriting. But knowing the domain is not the same as knowing how to redesign the work. Designing the right interaction between agents and humans — where to automate, where to hand off, where to keep a human in the loop not for compliance but because it genuinely produces a better outcome — requires the kind of judgment that only comes from deep, sustained focus on a problem space. That’s not a knowledge gap. It’s a design gap.

Get the agent-human interaction design right, and you will have an early advantage that compounds. Reflecting on the birth of ecommerce, Amazon nailed the checkout flow while countless merchants had obtuse, high-friction checkouts. The underlying capability was the same, but Amazon’s design of the end-to-end experience created an early competitive advantage that compounded. Seems obvious now, but it was not at the time.

#2 Orchestration is about how agents work with each other. Any meaningful enterprise process involves multiple agents — one that extracts data, another that analyzes it, another that drafts a communication, another that checks compliance. Someone must decide the architecture: which agents manage the workflow, when to use specialized agents, and when to invoke a skill versus spinning up a separate agent. Think of it like staffing an investment team — you need a leader who knows when to pull in the tax expert, the industry analyst, or outside counsel, in what order, with what context passed along. Knowing the industry vertical and the function is critical to optimizing specialist agents vs. general-purpose agents, pre-built integrations vs. as-needed API calls, and agents vs. humans. All of this will affect cost, reliability, and speed.

#3 Governance means the policies, approvals, and controls that determine who can deploy an agent, what it is allowed to do, what happens when they are duplicative, how decisions are reviewed, and the imposition of security and data requirements. In financial services, a model that generates investment recommendations may need to be validated, documented, and approved before it goes live. Some payments may be initiated by agents; others require human approval. In healthcare, an agent might read the radiology report, and even provide the results, yet not have authority to order prescriptions.

#4 Security in the enterprise is table stakes. It means data isolation, access controls, audit trails, and compliance with industry-specific regulations like HIPAA, SOX, or GDPR. A general-purpose agent can be powerful, but an enterprise buyer needs to know exactly what data it can access, who can see its outputs, and how to prove that to a regulator. Security may not strongly favor vertical software over foundation model providers in all industries, but it can in industries with industry-specific regulations like healthcare, financial services, and public sector.

#5 Accountability means having a throat to choke. When an agent makes an error that costs money or creates risk, enterprises need a vendor who owns the outcome. They need SLAs, incident response, and a product team that understands the domain well enough to diagnose what went wrong. A general-purpose agent does not come with a customer success team that knows your industry.

The companies that package all this together — the design, orchestration, security, governance, and accountability — are building something that a general-purpose agent with plugins simply does not replicate. Packaging complexity is a real and enduring source of value.

Vertical Software Players Can Succeed, But the Race Is On

This case for packaging complexity IS the defense of software, and especially of vertical software that brings domain knowledge to every decision. However, it’s not yet clear how enterprises will buy and deploy all this needed governance, security, and accountability.

Three categories of players are competing for the enterprise AI stack.

Foundation model providers. Anthropic, OpenAI, and Google are solidly individual productivity tools today, but the trajectory makes clear they are moving toward the orchestration layer. Anthropic shipped Cowork in January, added plugins two weeks later, then added enterprise connectors, private plugin marketplaces, and admin controls two weeks after that. And the February launch explicitly featured orchestration across Excel and PowerPoint, with context flowing between tools, not just a human chatting with an agent. The pace is extraordinary. What keeps them from being the default orchestration layer? Risk of model lock-in, for one. But some buyers will accept that.

Purpose-built horizontal orchestration platforms. Stack AI, Thread AI, Copilot Studio posit the enterprise wants a single neutral orchestration layer across all business functions: visual workflow builders, multi-agent coordination, governance dashboards, deployment infrastructure. This looks a lot like how enterprises have bought for decades, and frankly it is hard to imagine not having some layer like this because enterprises need to manage complexity across all their functions.

Vertical software vendors. Harvey in legal, Fazeshift in accounts receivable, Abridge in healthcare: these kinds of players own the domain expertise, workflow design, and customer relationship for a specific function. This is the category that needs to change the most and get the packaging right to survive. The best will absorb security, governance, and orchestration into their own products because their customers will demand it.

Realistically, all three will find buyers in the enterprise along lines of size/scale and technical sophistication. JPMorgan will build a lot more software in-house than it did before because the cost of doing so will fall. They will absolutely have their own horizontal orchestration platform. Small and midsize companies like a community bank or domestic manufacturer will buy a lot of individual vertical software products and need orchestration built in.

These are genuinely open architectural questions. What I believe is that vertical vendors are best positioned to solve the hardest part: designing and packaging the domain-specific work that produces outcomes. Whether they build, buy, or integrate the horizontal infrastructure is a strategic question each will answer differently. But domain expertise comes first, and that is not something a horizontal platform or foundation model can easily replicate.

Conclusion

Making the case for vertical startups may look crazy right now. That’s fine. The foundation model providers are building governance, orchestration, and enterprise packaging at remarkable speed, and the window for startups is not infinite. But the high-probability scenario is that the greatest problem to be solved is the hard, domain-specific work of designing how agents and humans should interact, how agents work with each other, and how all of it operates safely within the constraints of a real enterprise. That work favors the focused over the general. The opportunity is decades long, but the window to establish yourself is right now.

The State of Fintech in 2026

It’s here! All subscribers to Fintech Prime Time can access the full 2026 State of Fintech report via the F-Prime Fintech Index.

But first, save your spot with the F-Prime team for a virtual presentation and discussion of our findings on Tuesday, February 24 at 12pm ET / 9am PT.

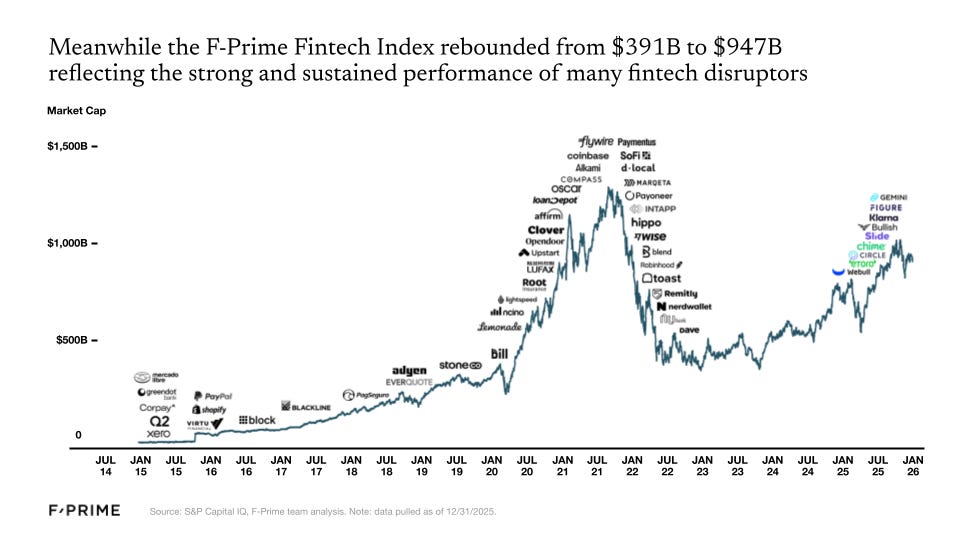

The fintech industry has experienced its ups and downs over the last five years. In 2021, the F-Prime Fintech Index market cap rose to $1.3T, followed by a swift correction in 2022 when the Index bottomed out below $400B. The effects of that correction lingered into 2023, but a slow and steady rebound began in 2024. By the end of 2025, the F-Prime Fintech Index was almost back to $1T.

At the same time, 2025 was the year we could definitively say three things. First, the fintech investments of the last decade have produced multiple new industry giants that lead in their respective categories — Nubank, Affirm, Stripe, Toast, and Robinhood, to name a few. Second, crypto has earned its seat next to traditional finance (TradFi). We expand on both these points in the State of Fintech report. Finally, 2025 was not the year of AI in financial services, at least relative to its early adoption in other industries and functions like coding, customer service, and legal. However, it is coming quickly and we anticipate future State of Fintech reports will show a lot more adoption.

The first months of 2026 brought sharper market discipline than many expected, eliminating over 80% of the Fintech Index market cap gain between year-end 2024 and 2025. Despite the Q1 2026 sell-off, we believe financial services providers will ultimately benefit more from AI than be disrupted by it. The outlook is less forgiving for legacy technology vendors serving financial institutions, many of whom risk being displaced by native agentic architectures. For now, however, public markets appear to be painting the sector with a broad brush.

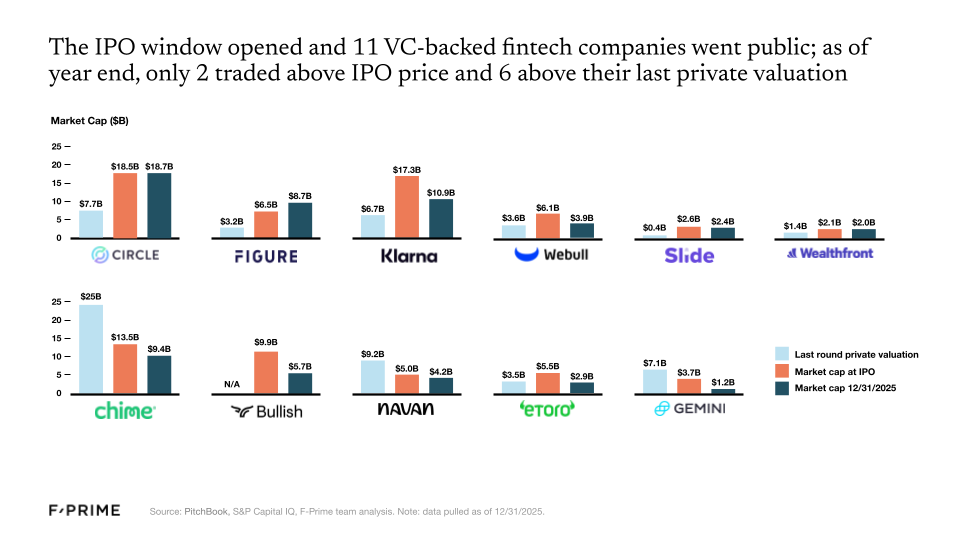

A Thaw in Public Fintech Markets

16 fintech companies went public in 2025, 11 of which were VC-backed. Despite subpar performance for many of these companies in the public markets (as of 12/31/2025 only two traded above their IPO price, and six traded above their last private round valuation), the IPO window is officially open. More public listings are on their way, with three more already in 2026! Meanwhile, fintech M&A is showing even greater signs of health, rebounding to pre-2021 levels.

Revenue multiples also continue to rise — over the last two years, investors have prioritized so-called “goldilocks” companies that are neither growing too fast nor too slow while approaching profitability. As for the companies comprising the F-Prime Fintech Index, fundamentals continue to strengthen. They grew at an average of 29% over the last year, with every sector seeing meaningful increases in net income margins since the growth-at-all-costs mindset that characterized the 2021 peak.

A New Generation of Financial Services Giants

The last 15 years have produced new industry heavyweights. Much like Uber, PayPal, and Square were initially dismissed yet came to lead their respective industries, so too have companies like Nubank, Affirm, Stripe, Toast, and Robinhood become leaders in theirs.

Measured against US standards, Revolut, SoFi, and Nubank would each rank in the top 1.5% of American banks if they were chartered in the US. Each has nearly $30B in deposits. In payments, Stripe and Adyen were tied for fifth place in the list of top global merchant acquirers, each with around $1.4T in TPV, while Toast processes an estimated 15% of the restaurant industry’s payment volume.

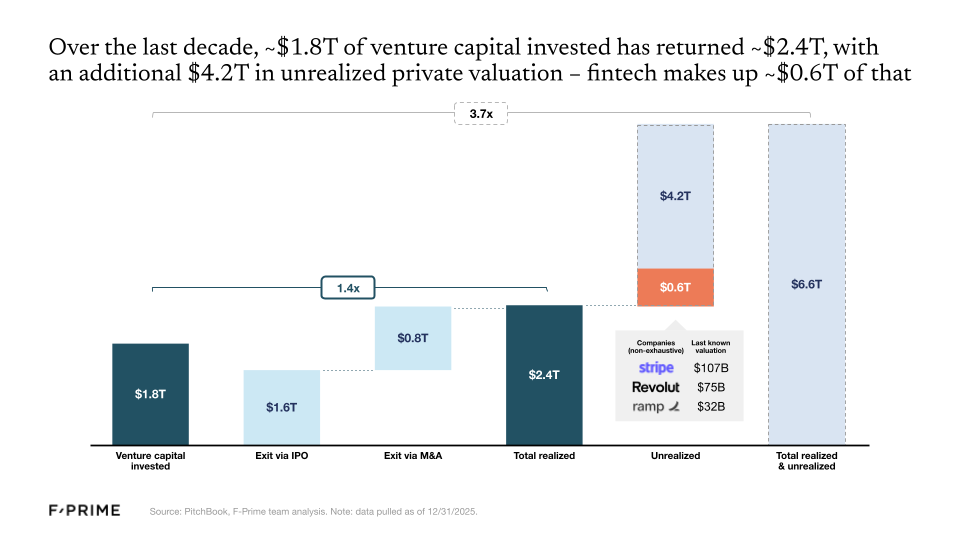

So the fintech wave of the 2010s has now officially produced its first generation of giants, but there are many others still waiting in the wings. Roughly $1.8T of venture capital has been invested in the category over the last decade, returning an estimated $2.4T. But $4.2T remains locked up in innovative private companies, with fintech making up around $0.6T of that total, including some of the most valuable fintech companies like Stripe ($107B), Revolut ($75B), and Ramp ($32B).

Crypto Grows Up

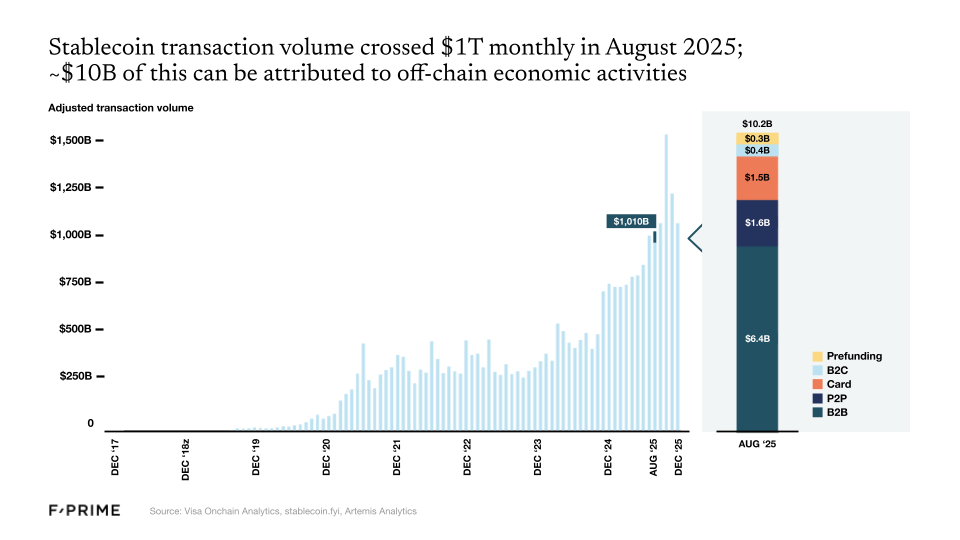

As of 2025, we can officially say that the crypto industry has earned a front-row seat alongside TradFi, crossing a number of thresholds that show real integration with the broader economy. For starters, issuers like Blackrock and Fidelity contributed to a total of more than 75 new crypto ETFs launched in 2025. This marks a structural shift in the makeup of the crypto market. At the same time, regulators’ posture towards crypto meaningfully shifted in 2025, paving the way for further institutional adoption moving forward.

And then there are stablecoins, which crossed $1T in monthly volume in 2025. Stablecoins may be the best example of a “killer use case” in crypto. Stablecoins could reduce the cost of remitting $200 from $20-30 via bank transfer to less than $1.

Following the initial adoption of stablecoins and tokenized treasuries, we can now wonder whether any financial asset will not be tokenized in the next 10 years. The next few years will see an expansion of tokenization across a wider spectrum of asset classes, including real estate, private credit, and other private funds.

AI Has Not Transformed Fintech (Yet)

There has been a lot of hype, but 2025 was not the year of AI in fintech. For now it remains a huge, mostly untapped opportunity — financial services is responsible for more than 20% of GDP in the US, but the industry currently has one of the lowest adoption rates for AI agents.

We knew that financial services would lag behind other industries, and for good reason. Accelerated AI adoption works for industries where:

- Context is text-heavy instead of numbers-heavy,

- Existing systems of record are easy to integrate with,

- Stakes are relatively low and imprecise values are still valuable, and

- There is low regulatory exposure.

Financial services strike out on most of these points.

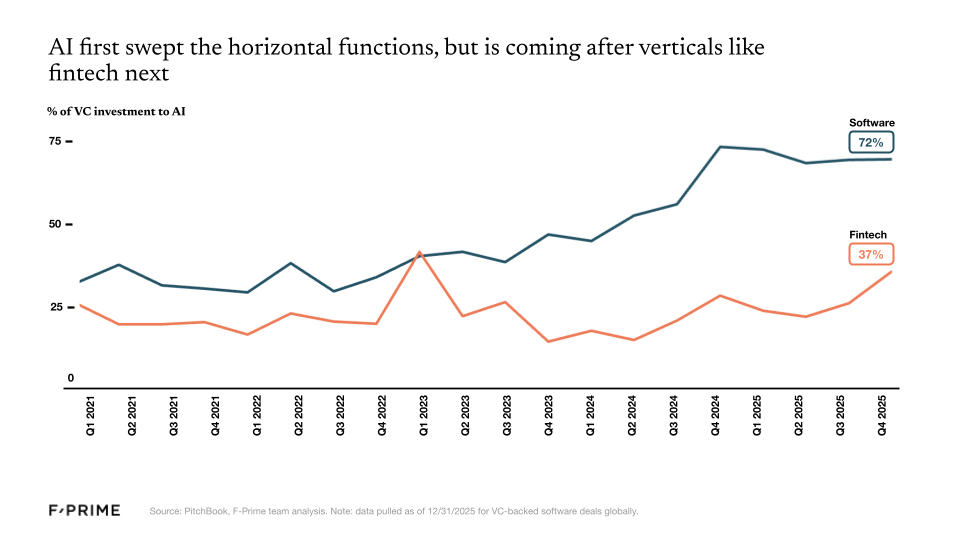

In the broader enterprise software space, nearly three quarters of every dollar invested now goes to AI companies. In the fintech vertical, that number is closer to one third. Since the launch of ChatGPT, fintech has produced a lower percentage of unicorn companies, and those that reach unicorn status are usually not AI-native.

However, we know that financial services is a worthy vertical for AI to tackle. The large models are already building for financial services — OpenAI in payments, Anthropic in financial research — but we believe startups can differentiate on workflow, integrations, and domain knowledge.

By the end of 2025, the primitives for nearly every sector of fintech had been put in place, and they are now ready for a new AI-native application layer to be built on top. We expect the coming years to be exciting and critical ones for AI in financial services and commerce, and it’s time to put the next generation of building blocks in place.

We’ve Never Been More Excited for the Future of Financial Services

If you’re as passionate about fintech as we are, there are so many reasons for excitement.

The regulatory landscape has never been more open to crypto innovation and adoption, and stablecoins are revolutionizing the way money flows around the world. Crypto ETFs are unlocking new pools of capital, and tokenization promises to create a more efficient infrastructure for all asset classes.

It’s still early days for AI in fintech, but the technology is already redesigning the way financial services businesses underwrite risk, design products, allocate capital, and serve their customers. And that’s before we consider AI’s role in determining how consumers earn and save, spend and pay, borrow and build wealth.

The last decade forged the next generation of great financial services companies, and AI is going to create the next.

Go deeper: Access the full report via the F-Prime Fintech Index here.

The Uncomfortable Truth About FDEs

Forward-deployed engineers (FDEs) are having a moment. Whether called “agent engineering” at Sierra, “customer engineering” at Shield, solution and sales engineering elsewhere, or their original name, “Delta”, they sit at the intersection of sales, engineering, and customer success, translating real-world complexity into product insight.

As AI products collide with messy enterprise reality – legacy systems, ambiguous workflows, and proprietary data – companies are rediscovering a Palantir-era tactic: embed engineers directly with customers to make the product actually work.

The appeal is obvious. FDEs compress sales cycles and bridge the gap between elegant demos and operational reality (hence their original name “Delta”), but they are a double-edged sword. At best, they create a tight feedback loop between the customer and product, but if done wrong, they can quietly transform software companies into bespoke service firms with bloated CAC, fragile margins, and roadmaps dictated by their loudest customers.

The question is not whether to build an FDE team, but how to design one without undermining the very economics that make software valuable.

FDEs are most effective when the product is powerful but the “last mile” is highly contextual. This describes the industry standard in AI and data infrastructure, where value depends on wiring into proprietary workflows and compliance constraints that no roadmap can fully anticipate.

They are also vital in design-partner markets. When early customers effectively co-create the product, FDEs become the fastest feedback loop between reality and code. In competitive markets, the second-best product with strong FDE support often outperforms the technically superior product that customers cannot operationalize.

- The Danger Zone: FDEs become toxic when custom work becomes the default. If every deal requires bespoke engineering, you don’t have a product; you have a consultancy with a logo. When “we’ll just throw an FDE at it” becomes an organizational reflex, product debt accumulates silently. Customers outsource their thinking to your engineers, and you inherit their complexity.

- Rule of Thumb: If more than 30-40% of deployments require significant FDE effort, the problem is no longer go-to-market. It’s product design.
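To make the rule of thumb concrete, here is a minimal sketch of how a team might track it. Everything in it is an illustrative assumption rather than something from the original analysis: the `Deployment` record, the 80-hour cutoff for "significant" FDE effort, and the choice to treat the 30-40% band as a warning zone with anything above 40% flagged as a product design problem.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    customer: str
    fde_hours: float  # hypothetical: FDE engineering hours spent on this deployment


def fde_heavy_share(deployments, significant_hours=80.0):
    """Return the fraction of deployments at or above the 'significant effort' cutoff.

    The 80-hour default is an assumed threshold; pick one that fits your business.
    """
    if not deployments:
        return 0.0
    heavy = sum(1 for d in deployments if d.fde_hours >= significant_hours)
    return heavy / len(deployments)


def diagnose(share, lower=0.30, upper=0.40):
    """Map the share onto the 30-40% band from the rule of thumb."""
    if share > upper:
        return "product design problem"
    if share > lower:
        return "warning zone"
    return "healthy"


# Made-up sample data: three of five deployments needed heavy FDE work.
deployments = [
    Deployment("A", 10),
    Deployment("B", 120),
    Deployment("C", 200),
    Deployment("D", 5),
    Deployment("E", 95),
]
share = fde_heavy_share(deployments)
print(f"{share:.0%} of deployments are FDE-heavy: {diagnose(share)}")
```

The useful part is less the arithmetic than the discipline: agreeing up front on what counts as "significant" effort, then reviewing the share on a regular cadence so the reflex to "throw an FDE at it" shows up in a number.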

The central tension here is economic. FDEs create real cost, but professional services revenue hurts valuation multiples.

- The Services Model: Billing time-and-materials keeps margins clean but dilutes valuation. What’s worse is that customers anchor on hourly rates rather than product value.

- The Bundled Model: Bundle FDE costs into the subscription price. It preserves “software-only” optics and simplifies procurement. It’s the pragmatic choice in early stages. However, it inflates subscription pricing and obscures the true drivers of CAC and gross margin.

The Solution: A milestone-based embedded model. FDE support is included in the deal but tied to defined milestones (e.g., “successful deployment”) rather than open-ended engagement. Embedding must be time-bound — usually three to six months. If customers cannot graduate from FDEs, the product is not ready.

For companies offering FDEs, financial planning and analysis are usually more troublesome than the actual engineering.

The uncomfortable truth is that FDEs often make metrics look better externally, but worse internally.

- Revenue: Only software counts as ARR. FDE revenue should be internally unbundled, even if external reporting lumps it together.

- CAC vs. COGS: Pre-sales FDE work is CAC. If you don’t track this, you will drastically overestimate your GTM efficiency. Post-sales work is Services COGS.

Finance teams must enforce an honest distinction between software margins and deployment margins. If FDEs are essential to closing every deal, your product is not yet self-serve at the enterprise level, and your P&L should reflect that.
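A minimal sketch of that internal split might look like the following. It assumes FDE time is logged per customer with a flag for whether the contract was already signed. The `FdeEntry` record, the `allocate` helper, and the hourly cost are hypothetical names and numbers, not a prescribed accounting method.

```python
from dataclasses import dataclass


@dataclass
class FdeEntry:
    customer: str
    hours: float
    contract_signed: bool  # False = pre-sales work, True = post-sales work


def allocate(entries, hourly_cost):
    """Split FDE cost into CAC (pre-sales) and services COGS (post-sales)."""
    pre_sales_hours = sum(e.hours for e in entries if not e.contract_signed)
    post_sales_hours = sum(e.hours for e in entries if e.contract_signed)
    return {
        "cac": pre_sales_hours * hourly_cost,
        "services_cogs": post_sales_hours * hourly_cost,
    }


# Invented time log and an assumed fully loaded cost of $150 per hour.
log = [
    FdeEntry("Acme", 40, contract_signed=False),
    FdeEntry("Acme", 120, contract_signed=True),
    FdeEntry("Globex", 25, contract_signed=False),
]
split = allocate(log, hourly_cost=150.0)
print(split)
```

Keeping the two buckets separate is what lets GTM efficiency and deployment margin be read independently, even if external reporting bundles them.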

The legal stance must be absolute: The company retains full IP ownership of all FDE work. Customers get a royalty-free license, but reusable components must flow back into the core product, not remain trapped in customer-specific forks.

Where FDEs actually sit is equally consequential:

- Reporting to Engineering: Better code quality, weaker revenue alignment.

- Reporting to Sales: Higher responsiveness, but a high risk of “short-term hacks” that create technical debt.

Best Practice: A dual-reporting model where FDEs sit within a Customer Engineering org but maintain a dotted line to Product. Crucially, rotate FDEs between customer sites and core development. This prevents “maintenance mode” burnout and ensures the FDE team doesn’t drift into a consulting mindset.

Forward-deployed engineers embody your product strategy, and your comp plan will dictate their behavior.

- The Sales Model: Paying FDEs commissions on closed deals encourages them to optimize for immediacy. Custom solutions multiply, and the company scales exceptions rather than a platform.

- The Core Model: Paying high base salaries with no variable component produces clean code but low urgency. Architectural purity takes precedence over customer timelines.

The companies that win must reject both extremes. They pay FDEs at engineering levels (read: equity-heavy) but introduce a restrained variable component tied to outcomes that signal maturity: successful deployment, retention, and the conversion of custom work into core product capabilities.

- The Activator (e.g., Sierra): In AI platforms, FDEs act as “agent product managers,” translating enterprise complexity into deployable systems. This is powerful but fragile, and must therefore be temporary.

- The Integrator (e.g., Ramp): In fintech, FDEs bridge the gap between modern software and legacy ERPs, banks, and internal tech stacks. They are the difference between a mid-market deal and a multi-million-dollar enterprise contract, provided they don’t let big customers hijack the roadmap.

- The Infrastructure (e.g., Palantir): When every customer requires embedded engineers forever, product velocity dies. Palantir built a giant business this way, but they operate in a market with extreme switching costs and existential stakes. Most startups do not have that luxury.

Many startups today use FDEs to compensate for immature products, unclear positioning, weak onboarding, missing integrations, and unrealistic enterprise promises.

In the ideal model, FDEs feed R&D. Each deployment generates insight into data schemas, workflows, edge cases, and constraints. Those insights become reusable features. If three FDEs solve the same problem, the solution becomes a native capability.

The real question is not whether to build an FDE team. It is how long you plan to depend on one.

FDEs are a mirror. They reveal the gap between what your product promises and what customers actually need. The companies that win treat FDEs as scaffolding – never as architecture.

From Text To Tables: Why Structured Data Is AI’s Next $600 Billion Frontier

Thanks to Chance Mathisen for his contribution.

In the current wave of generative AI innovation, industries that live in documents and text — legal, healthcare, customer support, sales, marketing — have been riding the crest. The technology transformed legal workflows overnight, and companies like Harvey and OpenEvidence scaled to roughly $100 million in ARR in just three years. Customer support followed closely behind, with AI-native players automating resolution, summarization, and agent workflows at unprecedented speed.

But industries built on structured data have not been as quick to adopt genAI. In financial services, insurance, and industrials, AI teams still stitch together thousands of task-specific machine learning models — each with its own data pipeline, feature engineering, monitoring, retraining schedule, and failure modes. These industries require a general-purpose primitive for structured data, an LLM-equivalent for rows and tables instead of sentences and paragraphs.

We believe that primitive is now emerging: tabular foundation models. And they represent a major opportunity for industries sitting on massive databases of structured, siloed, and confidential data.

LLMs use attention mechanisms to understand relationships between words, and simultaneously capture context, nuance, and meaning across sentences and entire documents. As these models scaled, an unprecedented supply of freely available text across the internet provided trillions of tokens that taught them how language works across domains, styles, and use cases. Models that could read, write, summarize, and reason over text suddenly became everyday business tools — drafting emails, answering tickets, and redlining contracts in seconds.

Entrepreneurs quickly recognized the pattern: plug into a foundation model’s API, wrap it in a vertical interface, solve a painful workflow, and sell seats to high-value knowledge workers. Thousands of AI-native startups followed, forming a virtuous cycle: application companies drove demand, foundation model providers reinvested in better capabilities, and improved models enabled even more powerful applications. Domain by domain, LLMs devoured unstructured data wherever it lived.

But LLMs were trained on text, not tables. When asked to work with structured data, they flatten spreadsheets into token sequences and strip away the meaning encoded in schemas, column relationships, data types, and numerical semantics.

The typical workaround is indirect. The model generates SQL or Python, hands it off to an external system for execution, and hopes the result is correct. This works for simple queries, but breaks down quickly. A single ambiguous column name — “revenue” versus “revenue_id” — can derail an entire analysis or forecast.
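The failure mode is easy to reproduce with a toy table. The sketch below uses Python's built-in `sqlite3` module. The `orders` table, its rows, and the two queries are invented for illustration, standing in for SQL that a model might generate.

```python
import sqlite3

# Hypothetical table with two similarly named columns.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER, revenue_id INTEGER, revenue REAL)"
)
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 101, 250.0), (2, 102, 400.0), (3, 103, 150.0)],
)

# The query the analyst meant, and the one a model might emit by mistake.
intended_sql = "SELECT SUM(revenue) FROM orders"
mistaken_sql = "SELECT SUM(revenue_id) FROM orders"

intended_total = conn.execute(intended_sql).fetchone()[0]
mistaken_total = conn.execute(mistaken_sql).fetchone()[0]

# Both queries run without error, so nothing flags the second as wrong.
print("intended:", intended_total)
print("mistaken:", mistaken_total)
conn.close()
```

Both statements execute cleanly and return a number, so the execution layer has no way to tell that the second result is meaningless.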

This problem compounds in large enterprises. Years of tech debt, acquisitions, and mergers leave behind dozens of siloed and brittle systems. Current LLMs and agents have greatly improved, but they still cannot reliably understand and manipulate an organization’s data, which lives across different ERPs, CRMs, data warehouses, and spreadsheets. A single query can force an agent to join tables that were never designed to fit together, built by teams that no longer operate.

As a result, high-stakes sectors like financial services and healthcare remain anchored to their trusted (and sprawling) stacks of traditional ML models. Startups have built agents that write Excel formulas or execute Python notebooks via natural language, but when it comes to actuarial-level accuracy, large-scale forecasting, or multi-table reasoning that drives million-dollar decisions, the heavy lifting still falls to libraries like XGBoost and LightGBM.

LLMs can interact with structured data, but they are not the right engine to model it.

Structured datasets require a foundation model built natively for structured data. It must understand schemas, column relationships, and numerical semantics from the ground up, rather than treating tables as flattened text.

The market opportunity here is staggering. The global data analytics market is projected to exceed $600 billion by 2030, but the industries most reliant on structured data — financial services, insurance, and healthcare — represent trillions in market cap that have yet to fully leverage generative AI.

Tabular foundation models may be the key required to unlock that TAM for startups. TFMs are trained to reason over rows and columns the way LLMs reason over sentences and pages. They deliver state-of-the-art predictions across classification, regression, and time-series tasks in seconds rather than hours.

Unlike traditional machine learning, TFMs can work with messy, heterogeneous data out of the box. They can deal with missing values, inconsistent formats, and ambiguous column names with no feature engineering, no model selection, and no hyperparameter tuning required.

A new generation of companies is building in this space, including Rowspace, Prior Labs, Fundamental, Intelligible AI, Kumo AI, Neuralk AI, Avra AI, and Wood Wide AI. Each is exploring different architectural approaches to representing tabular and relational data, learning cross-column dependencies, and generalizing across tasks.

The operational implications of TFMs are profound. Rather than maintaining a fragmented portfolio of brittle, task-specific models, enterprises can consolidate around a single foundation that generalizes across use cases. This would dramatically reduce the cost and complexity of building, monitoring, and retraining models.

But there are also real risks for startups building in this space. As LLMs get better at coding, some argue that generating analysis scripts on the fly could eliminate the need for specialized tabular models altogether. Open-source pressure may also compress technical differentiation, as happened with now-commoditized image models.

This makes distribution and business models critical. Technical advantage alone will not be durable. TFMs must be embedded into enterprise workflows, sold with clear ROI, and priced in ways that reflect the value of reliability and reduced operational overhead — before the shelf life of the technology advantage expires.

For industries where AI adoption has lagged, TFMs offer a reset. Use cases that once required months of data science work — custom pipelines, bespoke features, continuous retraining — can now be tackled with a single, general-purpose model that delivers reliable results out of the box.

In healthcare, that means patient risk stratification and diagnostic prediction.

In financial services, credit decisioning and fraud detection.

In insurance, claims triage and pricing optimization.

In manufacturing, predictive maintenance and demand forecasting.

These problems have been addressed with traditional ML for years — but never with the speed, flexibility, or scalability that a foundation model enables.

For founders, this is a greenfield opportunity. Just as LLMs unlocked a wave of AI-native companies built on text, TFMs open the door to startups tackling structured-data problems that were previously too slow, too expensive, or too complex to solve at scale. As investors with a long history of investing in infrastructure and applications that power financial services, healthcare, and regulated industries, we believe tabular foundation models represent the next major opportunity to unlock AI adoption in these industries. If you’re working on tabular foundation models, building applications on top of them, or tackling structured-data problems in those industries, we’d love to hear from you.

How much labor spend will AI capture? A lot, but not as much as the headlines suggest.

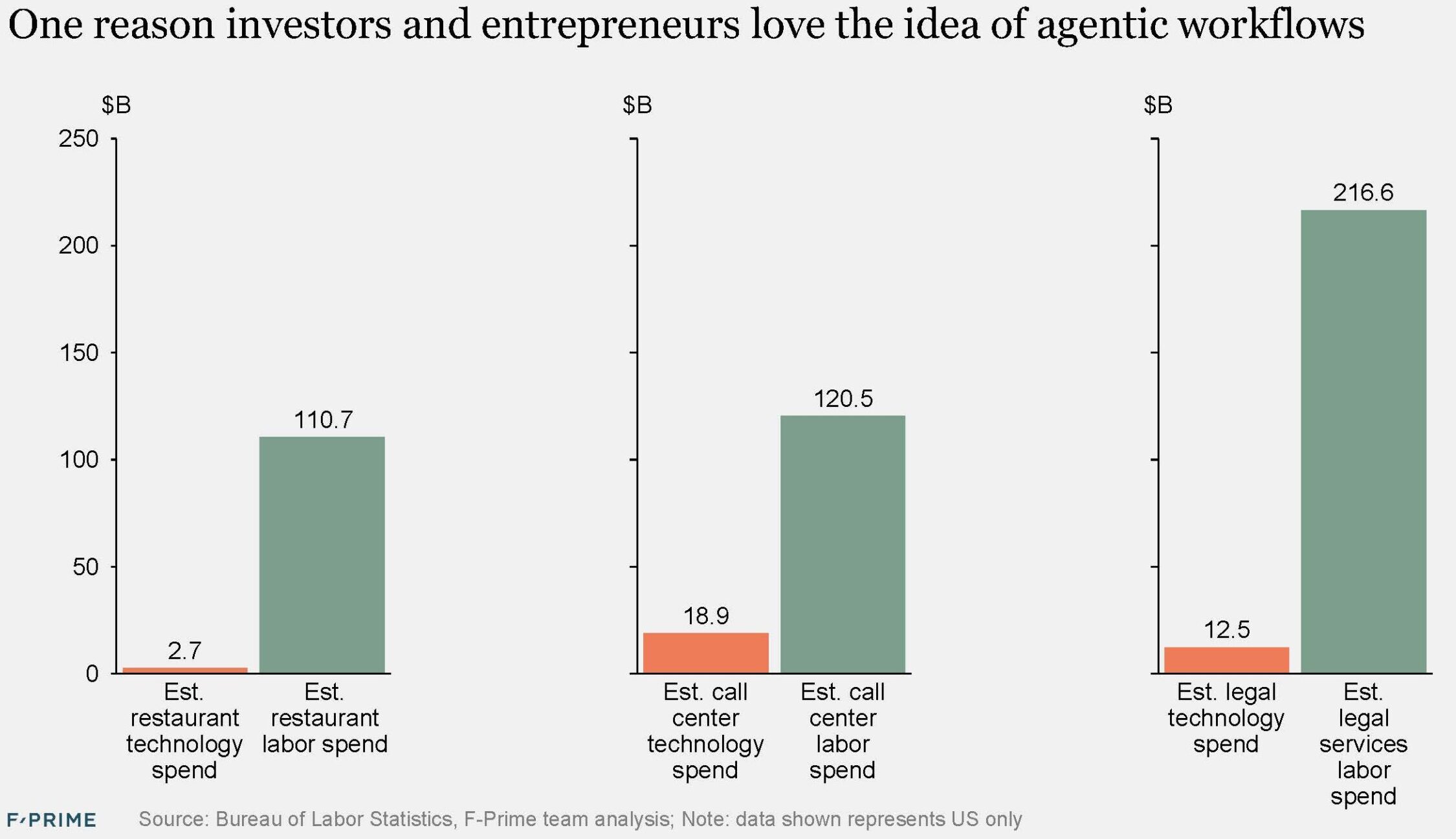

A core tenet has emerged that the AI opportunity is much larger than SaaS because it is going after labor spend, which is 10-30x larger. At the headline level, this is undeniably true in almost every industry.

However, over the last three years we have started to see how much labor spend AI can actually capture. TLDR: It’s a lot less than the headlines, but still a large expansion from SaaS. I anticipate software spend will increase 2-3x with the addition of agentic workflows.

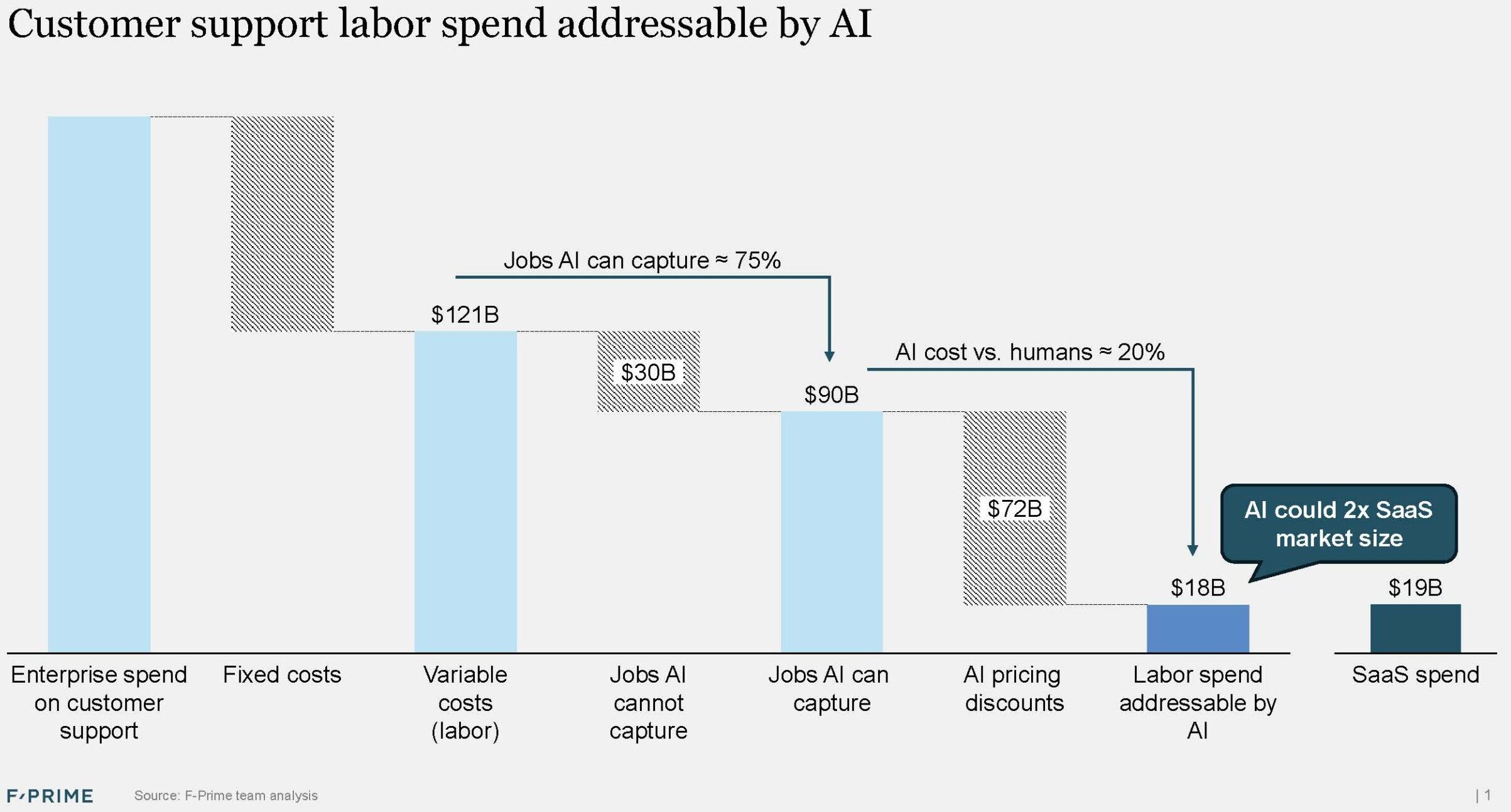

The answer will vary a lot by industry, but I am using this framework for sizing the AI market opportunity. I will illustrate it with customer support data, one of the earliest adopters of AI in the enterprise.

There are three main drivers.

#1 Fixed vs. variable costs. Call centers will continue to have management teams that hire and manage employees, procure technology, analyze data, and make decisions. Of a total customer support budget, it is typical to see 40% fixed costs, leaving 60% variable human costs doing the actual work of customer support.

#2 % of jobs that AI can handle. This number will steadily rise as AI gets better and enterprises customize agentic workflows to their specific needs. It is not going to reach 100% of customer support interactions, though, for many reasons: one-off or highly complex support needs, enterprise unwillingness to integrate AI agents with high-risk systems like payments or prescription ordering, etc. Still, out of the gate, we have seen AI handle 50% of chats and emails (less of voice calls), encouraging enterprises to target 75% deflection of support from live humans. It’s impossible to know where this settles, but 75% is possible, if optimistic. Over a long enough horizon, I will bet on AI’s inexorable improvement.

#3 AI cost vs. humans. It is fascinating to see AI vendors pricing AI agents at 10-20% of their comparable unit of labor replacement. For example, it costs many companies $5-10 per customer support interaction (variable only), but AI vendors like Sierra, Decagon, and Maven often charge ~$1. That is 80-90% variable spend reduction for enterprises…and reduced market size for AI vendors. To be sure, as companies grow, their customer support interactions grow, and so will the AI market opportunity, but all things equal, aggressive AI pricing deflates the market size.
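One simple way to combine the three drivers is to multiply them. The sketch below does that with the customer support figures quoted above. Treating the drivers as a straight product is an assumption made for illustration, not necessarily how the estimate in this post was built, and the function name is hypothetical.

```python
def accessible_share(variable_share, ai_handled_share, ai_price_ratio):
    """Fraction of total labor spend that ends up as AI vendor revenue.

    variable_share:   part of the budget that is variable human cost
    ai_handled_share: part of that work AI can take over
    ai_price_ratio:   AI price as a fraction of the human cost it replaces
    """
    return variable_share * ai_handled_share * ai_price_ratio


# Figures from the customer support example: 60% variable cost,
# a 75% deflection target, and AI priced at 10-20% of the human cost.
low = accessible_share(0.60, 0.75, 0.10)
high = accessible_share(0.60, 0.75, 0.20)
print(f"accessible share of labor spend: {low:.1%} to {high:.1%}")

# Per-interaction check of the pricing gap: ~$1 for AI vs. $5-10 for a human.
savings_low = 1 - 1 / 5
savings_high = 1 - 1 / 10
print(f"variable spend reduction: {savings_low:.0%} to {savings_high:.0%}")
```

Under these exact inputs the straight product lands in the mid-to-high single digits, so any headline range also depends on how optimistic the deflection and pricing assumptions are. Changing any one driver moves the result proportionally.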

In summary, there might be 10-30x more labor spend than SaaS today, but it is probable that only 10-20% of that is accessible to AI. That is better news for people worried about losing jobs to AI, but worse news for investors hoping for a larger market opportunity. In the end, there are many ways AI could capture more labor spend, and even take spend from SaaS, so this framework will evolve. We will all learn together.

Behind the Breakthrough: Q&A with Kai Eberhardt, CEO and Co-founder of Oviva

Kai Eberhardt transformed a personal cancer diagnosis in his twenties into a lifelong commitment to improving patient empowerment and healthcare accessibility.

Diagnosed with cancer in his early twenties, Kai Eberhardt quickly learned how disheartening it can feel to navigate the healthcare system without information or agency. That experience became a transformational force, first pushing him toward deeper medical knowledge, then through a PhD in medical physics, and ultimately into the business of healthcare.

He co-founded Oviva in 2014 with engineer Manuel Baumann to confront one of the most widespread, but underserved, health challenges in society: chronic weight-related conditions (such as obesity and type 2 diabetes). Despite the abundance of clinical evidence showing that behavior change and lifestyle interventions can be highly effective, few systems were designed to deliver them at scale, and even fewer offered sustained, patient-centric care accessible to everyday lives.

Eberhardt and his team saw an opportunity to reimagine care delivery, starting with something simple: a secure, compliant chat app connecting patients and their care teams. Over time, that communication layer evolved into Oviva’s full-stack digital care platform, now used by more than one million patients across the UK and Europe.

On the heels of Oviva’s expansion into cardio-metabolic conditions, and after nearly a decade of building credibility and capability in systems like the National Health Service (NHS), Eberhardt shares what it takes to turn frustration into innovation, how the company is scaling with purpose, and why technology is only one part of the solution.

What gap in the healthcare system were you aiming to address in founding Oviva?

The idea for Oviva emerged from a common challenge in obesity treatment—most patients don’t continue treatment after one or two visits. It just isn’t practical for patients to regularly attend sessions in-person despite a demand for care.

What stood out was that these same patients were always on their phones, and unlike other areas of care, weight management doesn’t require physical exams, lab work, or imaging. It largely includes education, coaching, and real-time support. So, we asked: what if we digitized the same care that we provided in-person and delivered it on their phones, anytime, anywhere? That would make it dramatically more accessible, and likely more effective, too.

Can you talk more about how this model helps address affordability and equity?

People managing chronic conditions often juggle jobs, childcare, daily stress – and weight-related health is important, but not always urgent. That makes it easy to de-prioritize care, especially when it requires a visit to a doctor’s office on a random Wednesday afternoon.

Making care available on your phone, on your own schedule, changes everything. For example, look at the NHS Diabetes Prevention Programme – about 20% of people completed the in-person model, but closer to 70% completed Oviva’s digital version. That’s a massive difference.

Virtual care also opens the door to serving culturally and linguistically diverse communities. With digital delivery, you can tailor the content, language, and nutrition guidance for many different patients. Curating care is almost impossible to do well in a one-size-fits-all, in-person group setting.

You integrate clinical, nutritional, and psychological care. What makes that approach so essential?

Obesity is multifactorial – you just can’t treat it through one lens. Some people need help with nutrition education, some have complex psychological patterns or trauma, and now we also have powerful medications that should be managed by doctors. No one discipline can cover it all.

Not every patient needs every service, but having a full stack available is essential to delivering effective care. We learned this from the best in-person programs – where coordination across teams made all the difference, though it was resource-intensive and hard to sustain. By operating digitally, we can bring those same multidisciplinary perspectives together without the limits of geography or scheduling.

What makes Oviva truly different from other players in your space?

We’re with our patients every day. That’s the biggest difference. Face-to-face models might give you 30 minutes with a clinician once a month. We’re a daily companion – logging meals, giving feedback, coaching, and support throughout the day. That consistency leads to better outcomes.

We’ve published more than 90 papers showing that we outperform in-person care, and because we’re digital, we can do it at lower cost and with broader reach. We’re essentially industrializing something that used to be artisanal – making personalized, behavior-change therapy highly scalable.

Regarding Oviva’s role within the NHS – what does it take to build innovation and credibility in a system as rigorous and complex as that one?

Evidence, first and foremost. I’ve always believed in backing up what we do with strong data, while publishing results publicly to build trust and demonstrate transparency.

After that, it’s about communication – having the skills and patience to speak to very different stakeholders across the NHS. And finally, it’s about partnership. We don’t try to replace services; we instead think about how we can add value to the system through better access and efficiency. This mindset helps us prove we’re here for the long haul.

You have talked about being driven by your own personal experiences in the healthcare space. Can you share how that energy helped shape your journey as a founder?

I’ve always been a pretty intense and action-oriented person. Frustration, for me, serves as a powerful motivator because it offers clarity and urgency. I don’t sit still when I see something broken. I’m not afraid to make decisions or move fast. I think that drive helped me do something many would consider irrational – starting a health tech company from the ground up in a pretty complex space.

Obesity is a field that often carries judgment or stigma. How do you lead with compassion and evidence in that environment?

Honestly, that’s one of the most fulfilling parts of what we do. Many of our patients haven’t received good care before – they’ve been judged or dismissed by the system. When we help them see real progress, it’s incredibly rewarding.

It’s not just for the patient’s benefit either. We’ve shown, with data, that our program reduces patient sick days by about a third within six months. That translates to added productivity in the workplace, tax revenue, and long-term cost savings – things that help the entire system. So, when people ask if this population is “worth investing in,” our results make the answer abundantly clear.

What advice would you give to other founders trying to build something in or alongside a public health system?

You need grit. It takes a long time to get through validation, adoption, and scaling inside a system like the NHS. The process can be very frustrating, especially when you know your solution could help people immediately, but adoption takes time.

Some delays are for good reasons, like needing strong evidence. Other delays are due to competing interests or systemic inertia. You must keep showing up and pushing forward. The reward is that once you’re in, and your model works, it’s incredibly sticky and impactful.

What excites you the most about what’s coming next?

We’re about to launch our hypertension solution, pending final regulatory approvals. It’s been in the works for two years and is a huge opportunity to build something that serves both patients and doctors more effectively – especially in how we manage data, daily insights, and ongoing support between visits.

The role of AI in all of this is just getting started. Our AI-first care model has the potential to transform patient support, making delivery more efficient and effective. We can provide even better continuity of care between doctor visits and better inform doctors for those visits. Since the ChatGPT moment, we’ve been embedding more AI features into our product, making care more scalable and improving outcomes. AI technology and Oviva are evolving rapidly – and I can’t wait to see how far we can go.

Robotics on the Rise: The State of Robotics Investment in 2025

Updating our annual report.

We had the opportunity to provide a mid-year update on our State of Robotics report at RoboBusiness 2025. The buzz at the conference was palpable, as this year is proving to be an incredible year for robotics. The market is hitting an inflection point with investment on pace to hit record highs, public and private market valuations growing rapidly, exits accelerating, and innovation continuing to offer transformative opportunity. The future of robotics is more exciting than ever!

We invite you to download the report here, and reach out to authors Sanjay Aggarwal and Betsy Mulé.